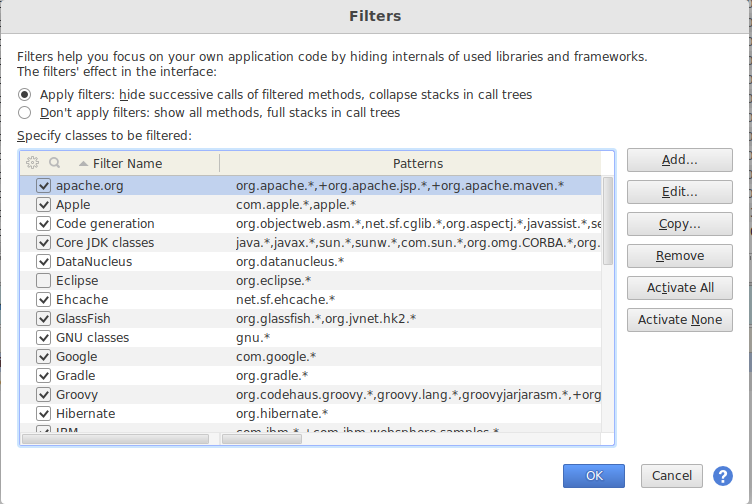

While many of the Spark developers use SBT or Maven on the command line, the most common IDE we use is IntelliJ IDEA. You can get the community edition for free (Apache committers can get free IntelliJ Ultimate Edition licenses) and install the JetBrains Scala plugin from Preferences > Plugins.

Go to File -> Import Project, locate the spark source directory, and select “Maven Project”. In the Import wizard, it’s fine to leave settings at their default. It is usually useful to enable “Import Maven projects automatically”, since changes to the project structure will then automatically update the IntelliJ project.

Some build configurations require specific profiles to be enabled. The same profiles that are enabled with -P on the Maven command line may be enabled in the Import wizard. For example, if developing for Hadoop 2.7 with YARN support, enable profiles yarn and hadoop-2.7.

By default, Spark’s scalafmt script formats the files that differ from git master. For more information, see the scalafmt documentation, but run Spark’s existing script rather than a locally installed version of scalafmt.

Git provides a mechanism for fetching remote pull requests into your own local repository. This is useful when reviewing code or testing patches locally. You need a local clone of the Spark Git repository to do this.

Test build #xx has finished for PR yy at commit ffffff.

If you believe that your binary incompatibilities are justified, or that MiMa reported false positives (e.g. the reported binary incompatibilities are about a non-user-facing API), you can filter them out by adding an exclusion containing what was suggested by the MiMa report and a comment containing the JIRA number of the issue you’re working on as well as its title.

For example, we might add the following:

// Fix an issue
ProblemFilters.

Otherwise, you will have to resolve those incompatibilities before opening or updating your pull request. Usually, the problems reported by MiMa are self-explanatory and revolve around missing members (methods or fields) that you will have to add back in order to maintain binary compatibility.
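The exclusion recipe above (the suggestion MiMa prints, plus a comment with the JIRA number and title) can be sketched as a small Python helper. Everything here is an assumption for illustration: that each suggestion appears in the report after the text `filter with:`, the `// [JIRA] title` comment layout, and the helper names `extract_suggestions` and `exclusion_entry`. The JIRA id and class name in the usage line are placeholders.

```python
import re
from typing import List

# Assumed report format: a line containing
#   filter with: ProblemFilters.exclude[SomeProblem]("fully.qualified.Member")
_SUGGESTION = re.compile(r'filter with:\s*(ProblemFilters\.exclude\[\w+\]\("[^"]+"\))')


def extract_suggestions(report: str) -> List[str]:
    """Return every ProblemFilters.exclude(...) suggestion found in the report text."""
    return _SUGGESTION.findall(report)


def exclusion_entry(jira_id: str, title: str, report: str) -> str:
    """Build an exclusion: a comment with the JIRA id and title, then the suggestions."""
    suggestions = extract_suggestions(report)
    if not suggestions:
        raise ValueError("no 'filter with:' suggestion found in the MiMa report")
    return "\n".join([f"// [{jira_id}] {title}", *suggestions])


if __name__ == "__main__":
    # Hypothetical report line; the class and member names are placeholders.
    report = '[error]    filter with: ProblemFilters.exclude[DirectMissingMethodProblem]("org.example.Foo.bar")'
    print(exclusion_entry("SPARK-00000", "Fix an issue", report))
```

The helper only copies what MiMa already suggested; deciding whether an incompatibility is actually justified remains a manual judgment.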
1. Click the “Actions” tab in your forked repository.
2. Select the “Run benchmarks” workflow in the “All workflows” list.
3. Click the “Run workflow” button and fill in the fields as follows:
   - Benchmark class: the benchmark class you wish to run.
   - JDK version: the Java version you want to run the benchmark with.
   - Failfast: whether to stop the benchmark and the workflow when a benchmark fails. When false, all benchmarks run whether they fail or not.
   - Number of job splits: the number of groups the benchmark jobs are split into and run in parallel. This is particularly useful for working around the time limits on workflows and jobs in GitHub Actions.
4. Once a “Run benchmarks” workflow has finished, click it and download the benchmark results from “Artifacts”.
5. Go to the root directory of your Apache Spark repository and unzip/untar the downloaded files there. The files land in the appropriate locations, which updates the benchmark results.

If the following error occurs when running ScalaTest
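The “Failfast” and “Number of job splits” fields in step 3 can be pictured with a small Python sketch. It is not the Run benchmarks workflow’s implementation: the round-robin split, the `run` callback, and the function names are hypothetical, and threads stand in for the parallel GitHub Actions jobs.

```python
import threading
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List, Sequence, Tuple


def split_jobs(benchmarks: Sequence[str], num_splits: int) -> List[List[str]]:
    """Deal benchmark names round-robin into num_splits groups (an assumed strategy)."""
    if num_splits < 1:
        raise ValueError("num_splits must be at least 1")
    groups: List[List[str]] = [[] for _ in range(num_splits)]
    for i, name in enumerate(benchmarks):
        groups[i % num_splits].append(name)
    return groups


def run_benchmarks(
    benchmarks: Sequence[str],
    num_splits: int,
    run: Callable[[str], bool],
    failfast: bool,
) -> Tuple[List[str], List[str]]:
    """Run each split in parallel; return (names that ran, names that failed).

    With failfast=True, the first failure tells every split to stop before
    starting its next benchmark. With failfast=False, everything runs.
    """
    groups = split_jobs(benchmarks, num_splits)
    stop = threading.Event()
    lock = threading.Lock()
    ran: List[str] = []
    failed: List[str] = []

    def run_split(group: List[str]) -> None:
        for name in group:
            if stop.is_set():
                return
            ok = run(name)
            with lock:
                ran.append(name)
                if not ok:
                    failed.append(name)
            if not ok and failfast:
                stop.set()
                return

    with ThreadPoolExecutor(max_workers=num_splits) as pool:
        # list() waits for every split and re-raises any exception raised in one.
        list(pool.map(run_split, groups))
    return ran, failed
```

For example, `run_benchmarks(names, 2, my_runner, failfast=False)` (with a hypothetical `my_runner`) runs two splits in parallel and reports every failure, while `failfast=True` stops each split before its next benchmark once any benchmark has failed.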

The Apache Spark repository provides several GitHub Actions workflows for developers to run before creating a pull request.

Running benchmarks in your forked repository

The Apache Spark repository provides an easy way to run benchmarks in GitHub Actions. When you update benchmark results in a pull request, it is recommended to use GitHub Actions to run and generate them, so that the benchmarks run in an environment that is as consistent as possible.
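After GitHub Actions has generated the results, the last local step is to unpack the downloaded artifact at the root of your Spark checkout. A minimal Python sketch of that step follows; the function name `apply_benchmark_results`, the `.git` check, and the assumption that paths inside the archive are relative to the repository root are all hypothetical, not part of Spark’s tooling.

```python
import shutil
from pathlib import Path


def apply_benchmark_results(archive: str, repo_root: str) -> None:
    """Unpack a downloaded benchmark-results archive at the repository root.

    Assumes (hypothetically) that paths inside the archive are relative to
    the repository root, so extracting there overwrites the matching files.
    """
    root = Path(repo_root)
    # Guard against unpacking outside a checkout: the root should contain .git.
    if not (root / ".git").exists():
        raise ValueError(f"{repo_root} does not look like the root of a Git checkout")
    # unpack_archive picks the format (.zip, .tar.gz, ...) from the file extension.
    shutil.unpack_archive(archive, extract_dir=str(root))
```

Usage would look like `apply_benchmark_results("benchmark-results.zip", "/path/to/spark")`, where both paths are placeholders. Reviewing the result with `git diff` before committing is a sensible habit.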

The Apache Spark community uses various resources to maintain the community test coverage.

GitHub Actions provides the following on Ubuntu 20.04:
- Java/Scala/Python/R unit tests with Java 8/Scala 2.12/SBT
- Daily Java/Scala/Python/R unit tests with Java 11/17 and Scala 2.12/SBT

AppVeyor provides the following on Windows:
- R unit tests with Java 8/Scala 2.12/SBT

Scaleway provides the following on macOS and Apple Silicon:
- Java/Scala/Python/R unit tests with Java 17/Scala 2.12/SBT

Useful developer tools

Reducing build times

SBT: Avoiding re-creating the assembly JAR

Spark’s default build strategy is to assemble a jar including all of its dependencies. This can be cumbersome when doing iterative development. When developing locally, it is possible to create an assembly jar including all of Spark’s dependencies and then re-package only Spark itself.

To generate a PySpark coverage report, run a command such as:

$ python/run-tests-with-coverage --testnames _arrow --python-executables=python
Generating HTML files for PySpark coverage under /./spark/python/test_coverage/htmlcov

You can check the coverage report visually in the HTML files under /./spark/python/test_coverage/htmlcov. Other available options are listed by python/run-tests --help.

Testing K8S

Although GitHub Actions provides both K8s unit test and integration test coverage, you can also run these tests locally. Some tests, such as the Volcano batch scheduler integration test, should be run manually. Please refer to the integration test documentation for details.

Apache Spark leverages GitHub Actions, which enables continuous integration and a wide range of automation.
